The irony landed with almost theatrical timing. In mid-April 2026, South Africa’s Department of Communications and Digital Technologies released an 86-page Draft National Artificial Intelligence Policy for public comment. The document set out bold plans. These included new institutions such as a National AI Commission, an ethics board, a dedicated regulatory authority, and an AI insurance superfund. It spoke of tax incentives, skills programmes, and positioning the country as an AI leader across Africa, all while addressing inequality and digital divides.

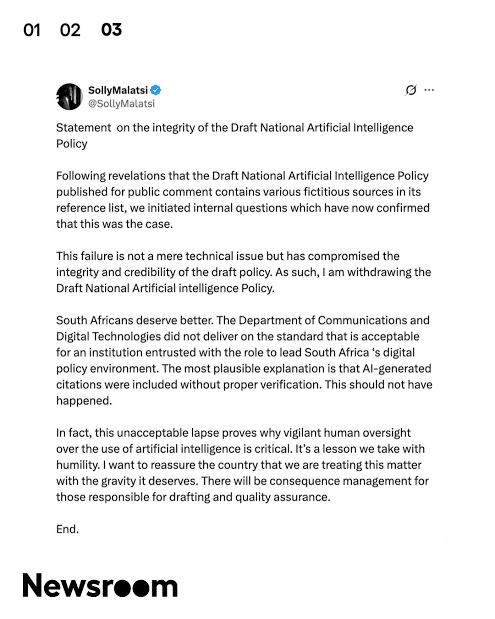

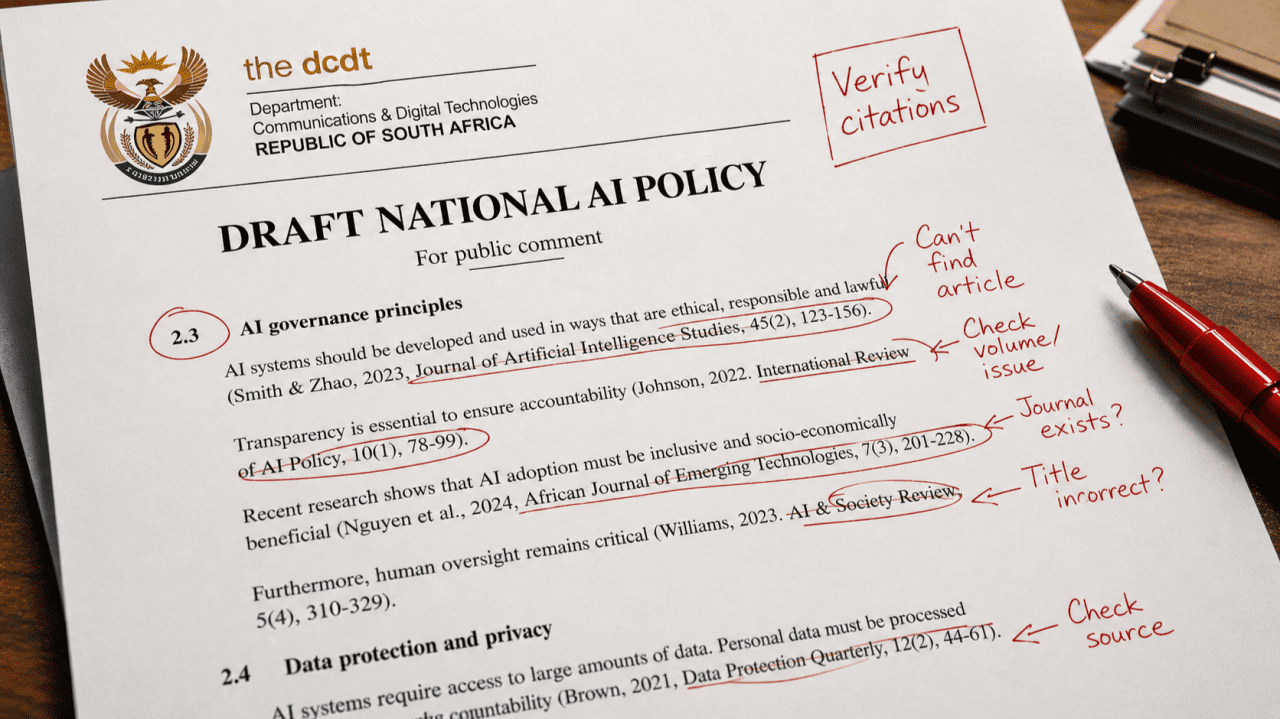

Barely two weeks later, the whole thing was quietly withdrawn. Minister Solly Malatsi acknowledged that several of the policy’s academic references (at least six, possibly more) were completely made up. Journals that didn’t exist. Papers never written. Authors who appeared to be inventions. News24’s investigation triggered the collapse, and experts pointed straight to the most obvious culprit: AI hallucinations. The minister himself conceded that artificial intelligence tools had likely generated the citations and that they were inserted without proper verification.

Instead of guiding the responsible use of AI, a national strategy became a textbook case of what happens when people lean too heavily on the technology and skip rigorous verification. The embarrassment was immediate for a government already navigating tough economic realities.

The road not taken: What if no one had noticed the AI hallucinations?

Had the fake references slipped through unnoticed, the consequences could have rippled far beyond red faces in Pretoria. Policymakers and stakeholders might have treated the document’s “evidence base” as solid ground for debates in Parliament, budget allocations, or negotiations with tech companies. Flawed ideas about risk categories, ethics frameworks, or innovation priorities, built on thin air, could have influenced actual laws and spending decisions for years.

In a country facing electricity shortages, high unemployment, and real infrastructure gaps, misdirected resources matter. Public trust in the government’s ability to manage complex technological issues could have suffered another setback. South Africa’s hope of shaping African AI conversations could have turned into awkward sidelong glances from international partners. Once bad policy embeds itself, unpicking it becomes slow, expensive, and politically messy. The painful withdrawal of the draft likely headed off bigger problems down the line.

This is not an isolated mistake

South Africa’s misstep reflects a broader pattern. In September 2025, a major education reform report in Newfoundland and Labrador, Canada (ironically, one that called for the “ethical” use of AI in schools) turned out to contain more than 15 fabricated sources. One cited a nonexistent National Film Board movie; another lifted a fake example straight from a university style guide, where it was meant only as a template. Academics described the errors as classic signs of unverified AI output.

The problem reaches deeper into academia. An analysis of NeurIPS 2025 papers found at least 100 hallucinated citations scattered across more than 50 published works, all of which had already passed peer review. A broader Nature investigation suggested that tens of thousands of scholarly publications from 2025 likely contain invalid AI-generated references. Computer science saw a sharp rise, with the share of affected papers climbing from around 0.3% in 2024 to 2.6% in 2025.

Courts have felt the impact too. Lawyers in multiple countries, including attorneys at high-profile U.S. firms such as Sullivan & Cromwell, have faced sanctions, fines, or referrals to bar associations after submitting briefs with nonexistent case citations produced by generative tools. One database tracking these incidents has logged well over a thousand cases globally. Judges have grown visibly frustrated, noting that the time spent chasing phantom authorities distracts from the real merits of disputes.

Even a high-profile U.S. government-linked report on children’s health reportedly contained suspicious references that raised similar questions. The pattern is clear: convenience tempts overworked civil servants, researchers under pressure, consultants on tight deadlines, and overburdened students alike to let AI handle the literature review and citation grunt work. When no one double-checks, the inventions slip through and start polluting the knowledge base.

Where this leads if left unchecked

If the habit continues to spread, the damage compounds quietly at first, then more visibly. Scientific literature risks turning into a hall of mirrors, where one fake citation gets referenced by another until tracing truth becomes exhausting. Policy decisions grounded in synthetic evidence could misfire, wasting public funds, delaying genuine progress, or creating rules that don’t fit real-world conditions.

In places like South Africa, where building strong local research capacity is already difficult, over-reliance on flawed AI output could widen capability gaps rather than close them. Public confidence erodes when official documents prove unreliable. Over time, it becomes harder to distinguish solid work from polished nonsense, weakening trust in institutions that depend on evidence.

The fix is not to abandon AI, because its ability to summarise, draft, and spot connections remains genuinely useful for everyday tasks and serious research alike. The challenge is learning to use it without surrendering judgment. Retrieval-augmented systems, which pull directly from verified databases rather than relying on a model’s memory, help cut hallucinations. Most importantly, users should check every citation against its original source, run claims through academic databases, and treat AI output as a fast first draft rather than a finished product. Institutions can require disclosure of AI assistance and build in multi-stage reviews.

For high-stakes work like national policy, governments should set the standard. Drafting teams need clear rules: AI can assist with structure or language, but every factual claim and reference must be verified by humans with domain knowledge. Investing in local tools trained on African data could reduce cultural or contextual slips. Journals and conferences are also tightening screening; some now run automated citation validators alongside traditional peer review.

Universities can also shift teaching emphasis toward critical evaluation: showing students how to partner with AI intelligently while keeping full responsibility for the integrity of their research.

The South Africa episode stings, but it also offers a useful lesson. It reminds us that technology amplifies both our strengths and our shortcuts. When we treat AI as a tireless assistant rather than an infallible oracle and insist on rigorous human oversight, we stand a better chance of unlocking its benefits without undermining the foundations of knowledge and governance it is supposed to support.