Meta is enhancing its technology to identify teenagers across its platforms, including those who have intentionally provided false birthdates to circumvent protections, as the company expands its teen safety framework on Instagram, Facebook, and Messenger.

On May 5, 2026, the company announced a series of improvements to its age assurance systems. The updates combine AI analysis, product restrictions, and parental tools for a more consistent and proactive approach to protecting young people online.

Meta has made a major update to improve how it detects underage users. The company’s systems now look at various signals like posts, comments, bios, and captions. They analyse these to find clues about a user’s age, such as mentions of school or age milestones. If these signals suggest that a user might be underage, Meta can ask for age verification, even if the account was created with an adult birthdate.
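Meta has not published how these signals are scored, so the following is only an illustrative sketch of the general idea: scan a user's text for age mentions and school references, then prompt for verification when an account with an adult birthdate accumulates enough of them. The patterns, weights, threshold, and function names (`underage_signal_score`, `needs_age_check`) are all hypothetical and far cruder than any production system.

```python
import re

# Hypothetical patterns for illustration only; not Meta's actual signals or rules.
AGE_PATTERN = re.compile(
    r"\b(?:i'?m|i am|turning|turned)\s+(\d{1,2})\b", re.IGNORECASE
)
SCHOOL_PATTERN = re.compile(
    r"\b(?:\d{1,2}(?:st|nd|rd|th) grade|middle school|freshman year|homeroom)\b",
    re.IGNORECASE,
)


def underage_signal_score(texts, adult_age=18):
    """Sum crude text signals across posts, comments, bios, and captions."""
    score = 0
    for text in texts:
        # An explicit age mention below the adult threshold is a strong signal.
        for match in AGE_PATTERN.finditer(text):
            if int(match.group(1)) < adult_age:
                score += 2
        # A school reference is a weaker signal on its own.
        if SCHOOL_PATTERN.search(text):
            score += 1
    return score


def needs_age_check(texts, stated_age, threshold=2):
    """Flag accounts that claim adulthood but whose text suggests otherwise."""
    return stated_age >= 18 and underage_signal_score(texts) >= threshold


if __name__ == "__main__":
    sample = ["Just turned 15!", "8th grade starts Monday"]
    print(needs_age_check(sample, stated_age=21))
```

A keyword heuristic like this would misfire often (a parent writing about a child's school, for example), which is presumably why the signals lead to a verification request rather than an automatic restriction.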

The company is introducing a new technology that can analyse photos and videos to estimate a person’s age range based on general visual cues. The tool does not rely on facial recognition or identify individuals. Instead, it looks at broad visual features and combines them with behaviour and text signals to improve accuracy in age detection.

Accounts suspected of belonging to underage users will need to verify their age. If verification fails, the accounts may be restricted or removed. Meta is also improving its systems to identify users who repeatedly try to bypass restrictions by creating new accounts after being removed.

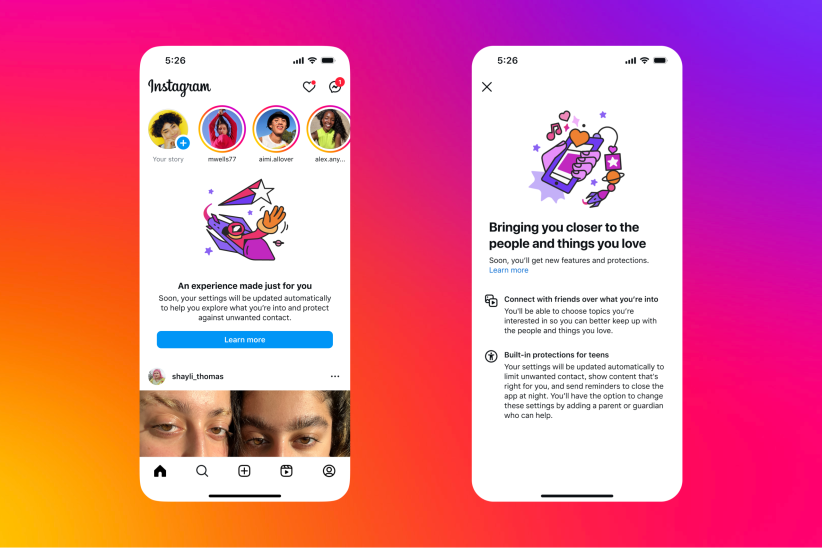

Meta reports that hundreds of millions of teenagers have been enrolled in its Teen Accounts system since launch. The system automatically restricts experiences for users under 18 and applies a default content setting for ages 13 and up, which limits their exposure to sensitive content and lowers the chance of unwanted contact with strangers.

Parents get new tools, but Meta wants a bigger industry fix

In addition to the AI improvements, Meta is introducing new notifications for parents. These notifications will help parents confirm their teen’s age on the platform and start discussions about the importance of giving correct information online. These new features expand upon the resources already available in Meta’s Family Centre, which provides families with tools and advice for managing their digital experiences on its apps.

For users who try to change their listed age in ways that would move them out of teen protections, Meta applies a combination of government ID checks and facial age estimation tools to assess whether the request is legitimate.

Meta is advocating for a broader solution that goes beyond individual apps. The company believes age verification works best when managed at the operating system or app store level. This way, the same age information can be used across different apps, instead of each app creating its own separate verification system.

Meta says this approach would reduce fragmentation, improve consistency, and be more privacy-preserving than the current app-by-app model.

The announcements come as global pressure on social media companies over teen safety continues to build, with regulators in the United States, the United Kingdom, Australia, and Nigeria all moving toward stricter requirements for how platforms handle underage users.