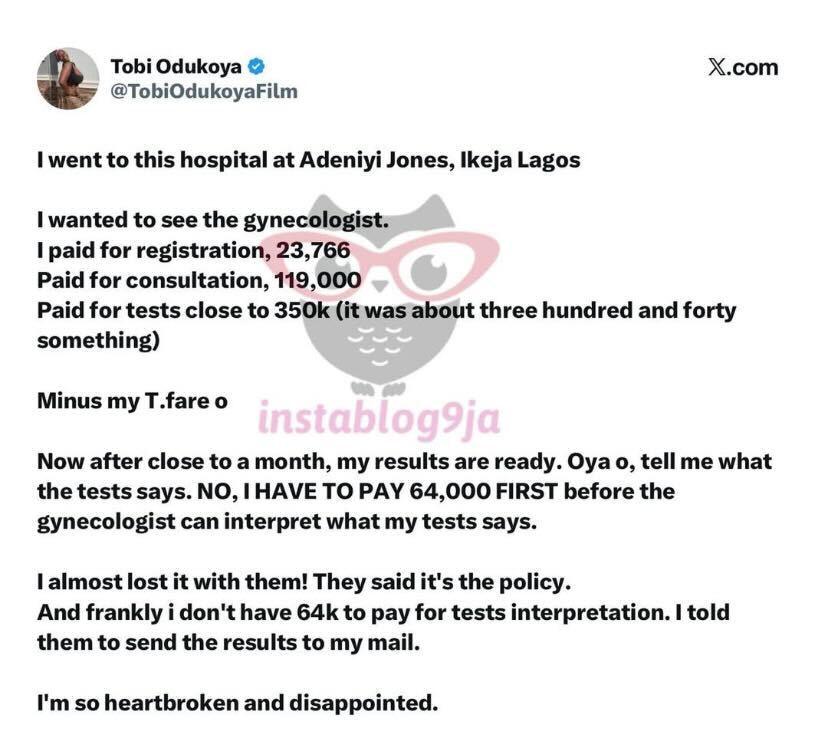

A viral post by marketer Tobi Odukoya has reignited conversations about the cost of healthcare in Nigeria and, more recently, sparked a parallel debate about whether artificial intelligence (AI) should step in to bridge healthcare access gaps.

In the post shared on X, Odukoya detailed her experience at a hospital in Adeniyi Jones, Ikeja, Lagos. After paying ₦23,766 for registration, ₦119,000 for consultation, and roughly ₦340,000 for tests, she expected clarity on her health status. Instead, she was told she needed to pay an additional ₦64,000 before a gynaecologist could interpret her results.

“Now, after close to a month, my results are ready. Oya o, tell me what the tests says. NO, I HAVE TO PAY 64,000 FIRST before the gynaecologist can interpret what my tests says,” she said.

Frustrated, she requested that the results be emailed to her instead, describing the experience as “heartbreaking and disappointing.”

The story quickly gained traction online, not just because it reflects rising healthcare costs, but also because of the satirical responses it triggered.

One particularly pointed reaction captured the mood: “Lol… any AI app would interpret it perfectly for you. Screenshot and ask Gemini AI for interpretation. It will break it down…even better than their doctor. 64k…after collecting all that?!!”

Behind the humour lies a serious question: as healthcare becomes increasingly expensive and inaccessible, are Nigerians turning to AI tools like ChatGPT and Claude for medical guidance?

The rise of AI as a “doctor”

Globally, millions already are. According to OpenAI, more than 200 million people ask ChatGPT health and wellness questions weekly. But a new study published in BMJ Open suggests that this trend could come with significant risks.

Researchers from the US, Canada, and the UK tested major AI platforms, including ChatGPT, Gemini, Meta AI, Grok, DeepSeek, and Claude, asking them medical questions across categories like cancer, vaccines, nutrition, and stem cells.

The results were sobering.

Roughly 50% of responses were classified as problematic, with nearly 20% deemed highly problematic. While chatbots performed better on structured, closed-ended questions, their accuracy dropped significantly with open-ended prompts, the kind users are most likely to ask when describing symptoms.

Even more concerning was the tone of the responses. The study found that AI systems often delivered answers with high confidence, even when the information was incomplete or misleading. None of the platforms produced fully accurate or comprehensive references to back their claims.

In short, the bots sounded convincing, but weren’t always correct.

When access meets risk

In contexts like Nigeria, where healthcare costs can be prohibitive, as illustrated by Odukoya’s experience, the appeal of AI becomes obvious. Why pay tens of thousands of naira for interpretation when a chatbot can provide instant answers for free?

The catch is that, unlike licensed medical professionals, AI chatbots lack clinical judgment. They cannot examine patients, interpret nuanced symptoms, or account for individual medical histories. At best, they offer generalised information; at worst, they risk amplifying misinformation.

The BMJ Open researchers warned that these systems can generate “authoritative-sounding but potentially flawed responses,” especially in areas requiring specialised expertise.

A gap technology can’t yet fill

The irony is hard to miss. On one hand, patients are being priced out of critical healthcare services, including something as fundamental as test interpretation. On the other hand, the alternative many are joking about, or even seriously considering, is a tool whose answers the study found problematic roughly half the time.

It’s a gap that technology alone cannot fix.

While companies like OpenAI and Anthropic are rolling out healthcare-focused features for both users and clinicians, experts say public education and regulatory oversight remain crucial. Without them, AI risks becoming a shortcut that compromises patient safety rather than improving access.

The bigger picture

Odukoya’s experience is not an isolated incident; it reflects systemic issues in Nigeria’s healthcare system, where out-of-pocket spending remains high, and insurance coverage is limited.

The viral reactions it sparked, however, point to something new: a growing willingness to consider AI as part of the solution.

But until these systems can consistently deliver accurate, clinically sound advice, relying on them as substitutes for medical professionals is a gamble.

For now, the real challenge isn’t choosing between hospitals and chatbots; it’s fixing the system so that patients don’t feel forced to make that choice in the first place.
