Ayoola Afolabi argues that artificial intelligence has transformed how financial institutions detect fraud, but that detection alone is not the same as protection, and the gap between the two is quietly eroding the trust that digital platforms depend on.

The alert arrives on your phone. Your bank has flagged a transaction. You wait. You call the support line and explain what happened, and then explain it again to a different agent. By the time the matter is resolved, the experience has left something behind, and it is not relief. It is doubt.

This is the central tension that Ayoola Afolabi, a UK-based fraud-prevention and financial technology professional, wants financial institutions to sit with. Fraud, he says, is no longer a simple or isolated problem.

Today’s attacks combine social engineering, behavioural manipulation, and technical vulnerabilities, often striking across multiple channels at once.

The industry’s response has been to reach for artificial intelligence, and AI has delivered, processing large volumes of data, identifying anomalies, and flagging risks within seconds. But Afolabi believes the conversation about what AI can do has moved far ahead of the conversation about what happens next.

“Detection is only one part of the story,” he says. “If you look at what happens after a transaction is flagged, the experience is often less seamless. You receive an alert. You wait. You reach out for support. You explain the situation more than once. In that moment, it doesn’t feel like a highly intelligent system. It feels like a disconnected one.”

Detection is not the same as protection

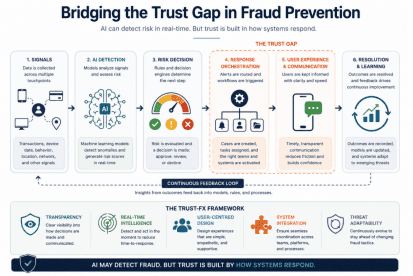

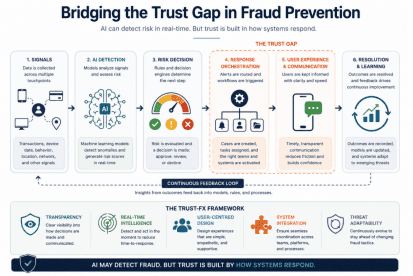

The distinction Afolabi draws is precise. AI-driven systems are built to recognise patterns and assign risk scores. They are optimised for accuracy, for identifying when something does not look right. But identifying a problem, he points out, is not the same as resolving it.

Once a signal is generated, it still needs to move through decision engines, operational workflows, and customer-facing processes. If those layers are not well aligned, the system creates friction rather than protection.

The most visible symptom is the false positive, the legitimate transaction incorrectly flagged, which stalls activity and chips away at the user’s confidence that the system is working for them rather than against them.

“For the user, what matters most is not that the system detected fraud,” Afolabi says. “It is how effectively it responds.”

Where trust begins to break down

Afolabi, who is currently a Fraud and Change Professional at Barclays UK, calls this the trust gap and places it squarely between detection and response. A system might correctly identify fraud, but if the response is slow, unclear, or inconsistent, confidence erodes quickly. That erosion does not stay contained to a single incident.

Over time, it shapes how people interact with digital platforms, not just in moments of failure, but in their overall willingness to rely on them.

The consequences go beyond individual frustration. Systems that struggle to respond effectively generate operational inefficiencies, increase customer friction, and introduce reputational risk. These factors shape how a platform scales and how it is perceived in a market where users have choices.

The framing Afolabi returns to is this: fraud prevention is often treated as a technical challenge, when it is equally a system design problem. Modern fraud operates across multiple touchpoints, and the response must be just as connected.

A framework built around the full system

This is the thinking behind the TRUST-FX Framework that Afolabi has developed, an approach that treats fraud as an end-to-end system rather than a series of isolated components. The framework is organised around five interconnected principles:

- Transparency, meaning clear and timely communication

- Real-time intelligence, covering rapid detection and response

- User-centred design, building systems that people can navigate easily

- System integration, ensuring coordination across teams and platforms

- Threat adaptability, demanding continuous evolution as risks change

Each principle has value on its own.

Together, Afolabi argues, they determine how effectively a system can translate detection into meaningful outcomes. The framework does not position AI as peripheral. It positions AI as central to real-time intelligence, but contingent on everything around it.


“There is a growing tendency to view AI as the solution to fraud,” he says. “In reality, it is one part of a larger architecture. Without strong coordination, clear communication, and responsive processes, even advanced AI systems can fall short. Fraud does not just test detection capabilities. It tests how systems behave under pressure.”

The next phase

Looking ahead, Afolabi, who brings cross-market experience from Nigeria and the UK, sees trust becoming as important as capability.

As digital systems continue to expand and users grow more comfortable comparing experiences across platforms, the institutions that retain confidence will be those that can respond effectively, communicate clearly, and adapt continuously.

People expect systems to be not only intelligent but also reliable. When something goes wrong, they want clarity, speed, and reassurance, not complexity. For Afolabi, the implication for organisations building and scaling digital platforms is direct: thinking beyond detection is not optional. It is the work.

“Detection without response is incomplete,” he says. “Technology without trust is insufficient. Because in the end, trust is not built by what a system detects. It is built by how it responds.”